Imitation Learning for Autonomous Robotic Surgery

A walkthrough of Johns Hopkins University and Stanford University research using the da Vinci robotic surgery platform

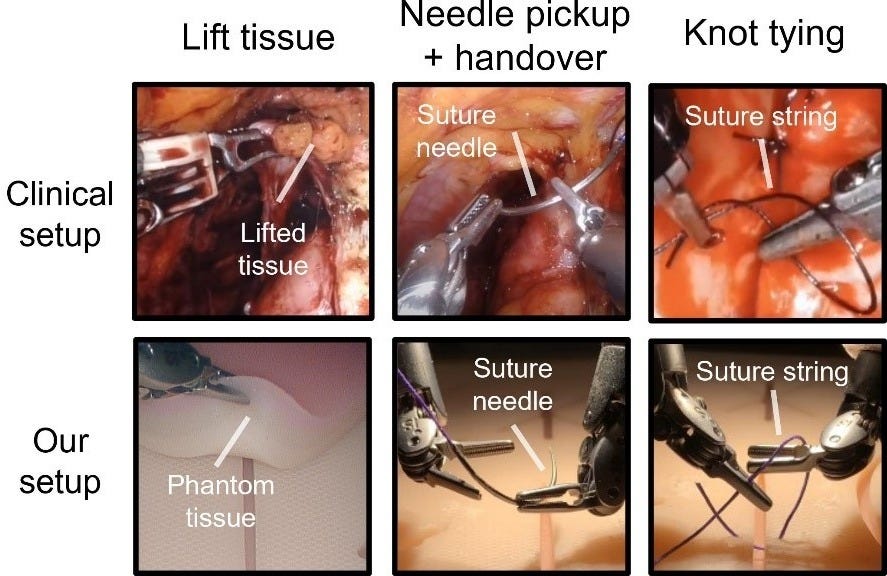

Researchers from Johns Hopkins University and Stanford University recently trained AI models to control a surgical robot, the da Vinci Research Kit (dVRK) from Intuitive Surgical, so that it autonomously performs three tasks:

- Tissue lift

- Needle pick and handover

- Knot tying

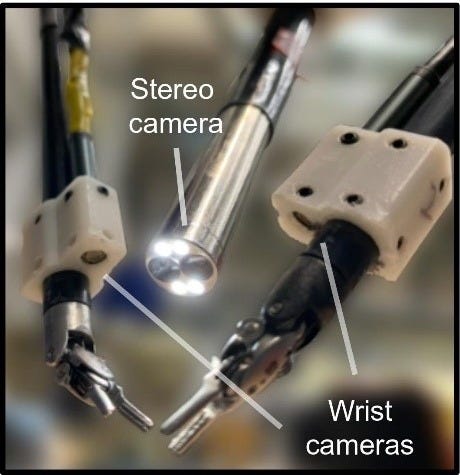

The da Vinci Research Kit features an endoscopic camera manipulator and two patient-side manipulators. For this study, wrist cameras were added to the patient-side manipulators.

The Study At A Glance

Action Representation

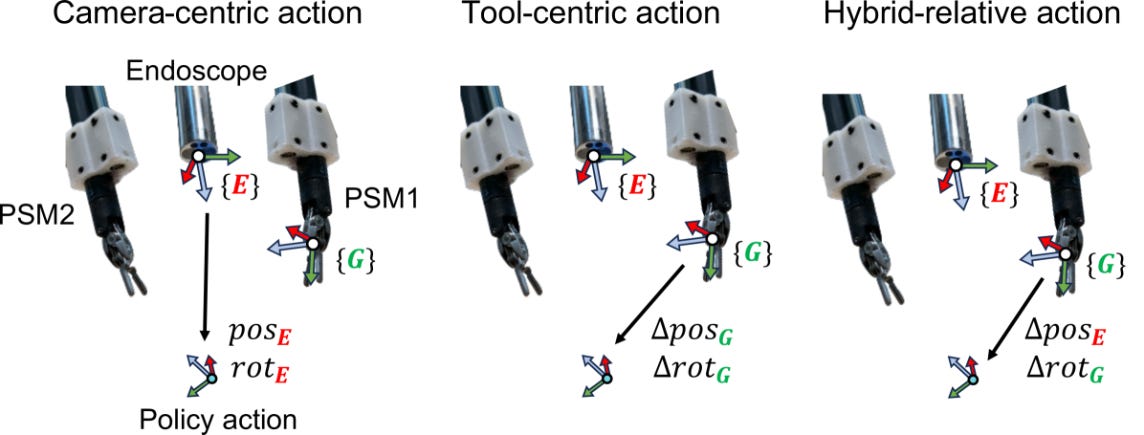

The main challenge was that the robot's measurements of its manipulators' positions are imprecise. To address this, the researchers compared three ways of representing the actions taken:

- Camera-centric: Actions are modelled as absolute positions of the end-effectors with respect to the endoscope tip.

- Tool-centric: Actions are modelled as relative motion with respect to the current end-effector frame.

- Hybrid-relative: Actions are modelled as relative motion with respect to the endoscope tip frame for translations and the current end-effector frame for rotations.
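The three formulations above can be illustrated with a minimal sketch. It assumes each end-effector pose is given as a 4x4 homogeneous transform expressed in the endoscope tip frame; the helper names (`make_pose`, `rot_z`, `camera_centric`, `tool_centric`, `hybrid_relative`) are hypothetical and are not taken from the paper's code.

```python
import numpy as np

def make_pose(rotation, position):
    """Build a 4x4 homogeneous transform from a 3x3 rotation and a 3-vector."""
    T = np.eye(4)
    T[:3, :3] = rotation
    T[:3, 3] = position
    return T

def rot_z(angle_rad):
    """Rotation matrix for a rotation about the z-axis."""
    c, s = np.cos(angle_rad), np.sin(angle_rad)
    return np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])

def camera_centric(T_target):
    """Absolute target position and rotation in the endoscope tip frame."""
    return T_target[:3, 3].copy(), T_target[:3, :3].copy()

def tool_centric(T_current, T_target):
    """Relative motion expressed entirely in the current end-effector frame."""
    T_rel = np.linalg.inv(T_current) @ T_target
    return T_rel[:3, 3], T_rel[:3, :3]

def hybrid_relative(T_current, T_target):
    """Translation delta in the endoscope tip frame,
    rotation delta in the current end-effector frame."""
    dp = T_target[:3, 3] - T_current[:3, 3]
    dR = T_current[:3, :3].T @ T_target[:3, :3]
    return dp, dR

# Example: the tool is rotated 90 degrees about z and moves 1 unit along the
# endoscope's y-axis without changing its orientation.
T_now = make_pose(rot_z(np.pi / 2), [1.0, 0.0, 0.0])
T_next = make_pose(rot_z(np.pi / 2), [1.0, 1.0, 0.0])

abs_p, abs_R = camera_centric(T_next)
tool_dp, tool_dR = tool_centric(T_now, T_next)
hyb_dp, hyb_dR = hybrid_relative(T_now, T_next)
```

In this example the same physical motion is described as a step along y in the hybrid-relative form but as a step along x in the tool-centric form, because the tool's own axes are rotated relative to the endoscope. Both relative forms report an identity rotation delta.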

AI models

Two AI model architectures were used:

- Action Chunking with Transformers (ACT)

- Diffusion Policy

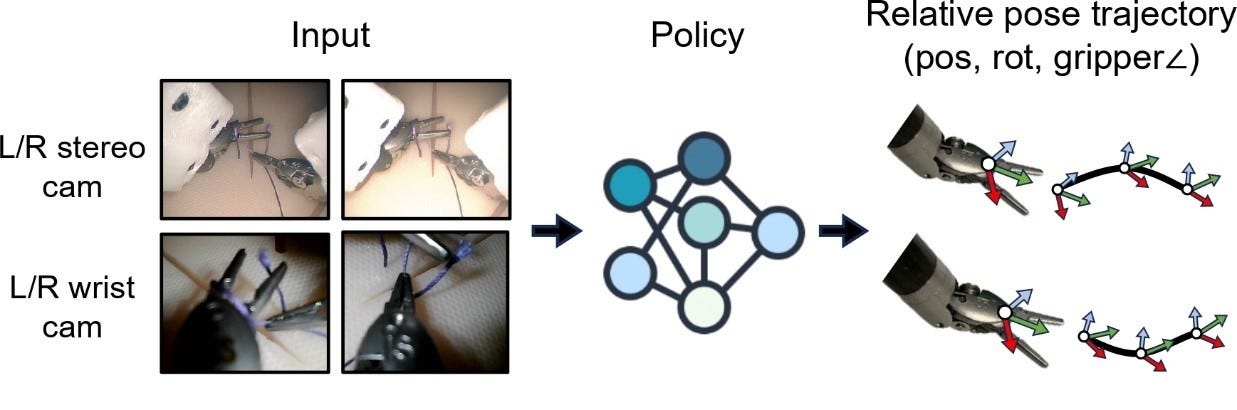

The inputs were camera images, including those from the wrist cameras. The outputs, for each of the two arms, were the end-effector position (or change in position), the orientation (or change in orientation), and the jaw angle.
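To make the output layout concrete, here is a minimal sketch of how a two-arm action could be packed into one flat vector. The `ArmAction` class, the `pack_bimanual` function, and the choice of a 3-element rotation vector for orientation are all assumptions made for illustration; the paper may encode orientation differently.

```python
from dataclasses import dataclass
import numpy as np

@dataclass
class ArmAction:
    """Action for one patient-side manipulator (hypothetical layout)."""
    position: np.ndarray     # shape (3,): position or position change
    orientation: np.ndarray  # shape (3,): rotation vector (assumed encoding)
    jaw_angle: float         # gripper jaw opening

    def to_vector(self):
        """Flatten to [position, orientation, jaw_angle], length 7."""
        return np.concatenate([self.position, self.orientation, [self.jaw_angle]])

def pack_bimanual(left, right):
    """Concatenate the left-arm and right-arm actions into one vector."""
    return np.concatenate([left.to_vector(), right.to_vector()])

left = ArmAction(np.array([0.001, 0.0, 0.0]), np.zeros(3), 0.3)
right = ArmAction(np.array([0.0, -0.002, 0.0]), np.zeros(3), 0.8)
action = pack_bimanual(left, right)  # 7 values per arm under these assumptions
```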

Training process

The training dataset comprised 224 trials for tissue lift, 250 trials for needle pick and handover, and 500 trials for knot tying. A single user performed all of these trials, using a clinical phantom and a dome-shaped simulator designed to replicate the human abdomen.

Results

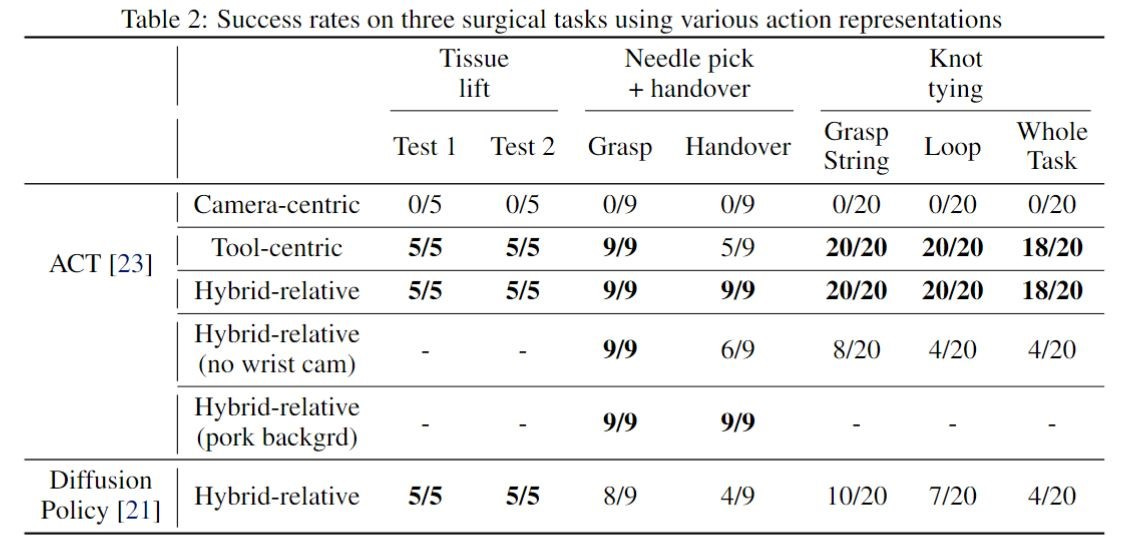

The results showed that policies trained with the relative action formulations (tool-centric and hybrid-relative) performed well, while policies trained with the camera-centric formulation, which relies on absolute positions computed from forward kinematics, failed. The researchers also observed that wrist cameras significantly improved policy performance, especially during phases requiring precise depth estimation.
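One intuition for this result can be shown with a toy calculation. The sketch below is a deliberate simplification, not the paper's analysis: it considers translation only and assumes the kinematic error is a constant bias that differs between data collection and deployment. All names and numbers are hypothetical.

```python
import numpy as np

def measure(true_pos, bias):
    """Position reported by forward kinematics: true position plus a bias."""
    return true_pos + bias

def reach_absolute(target_measured, bias):
    """The controller drives the measured position to the target, so the
    true position ends up offset by the current bias."""
    return target_measured - bias

def reach_relative(true_start, delta, bias):
    """The controller adds a delta to the current measured position;
    the bias appears in both terms and cancels."""
    commanded = measure(true_start, bias) + delta
    return commanded - bias

true_start = np.array([0.0, 0.0, 0.0])
true_goal = np.array([0.010, 0.0, 0.0])        # 10 mm step in metres
bias_demo = np.array([0.002, 0.0, 0.0])        # bias while recording demos
bias_deploy = np.array([-0.003, 0.001, 0.0])   # different bias at test time

# Labels the policy would learn from the demonstration
abs_label = measure(true_goal, bias_demo)
rel_label = measure(true_goal, bias_demo) - measure(true_start, bias_demo)

# Replaying those labels at deployment
abs_error = reach_absolute(abs_label, bias_deploy) - true_goal
rel_error = reach_relative(true_start, rel_label, bias_deploy) - true_goal
```

Under these assumptions the absolute action misses the goal by the difference between the two biases, while the relative action lands on it.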

A series of videos showing successful rollouts of policies trained with the hybrid-relative action formulation has been published, including examples of zero-shot generalization where the clinical phantom is replaced by chicken, pork or a 3D pad.

To Go Further

If you wish to go beyond this overview, the complete paper can be found at https://arxiv.org/html/2407.12998v1

The project website, https://surgical-robot-transformer.github.io, offers further information.

Note: All images and tables in this article are sourced from https://surgical-robot-transformer.github.io and https://arxiv.org/html/2407.12998v1#A1, unless otherwise indicated as the original work of the author.